When sales teams talk about "scraping data from LinkedIn," what they’re really describing is an attempt to automate the extraction of prospect information from profiles and company pages. It’s a shortcut to a goldmine of B2B data, but it's a high-stakes game that directly violates LinkedIn's terms of service and can get your account shut down fast.

The Realities Of LinkedIn Data For B2B Sales Teams

If you're in B2B sales, you already know LinkedIn is where your ideal customers live. They’re posting about new roles, company wins, and project challenges—all valuable buying signals. The problem isn’t finding these people. The problem is getting their contact data out of LinkedIn and into your sales engagement platform efficiently—without getting banned.

This creates a constant tug-of-war for modern sales organizations. On one side, you have the richest source of B2B data on the planet. On the other, LinkedIn is building higher walls every day to block the very scraping tools teams use to access it. For SDRs and AEs trying to hit quota, it’s a daily struggle.

The Frustration Of Manual and Risky Workflows

To hit their numbers, many SDRs and BDRs are stuck between a rock and a hard place. They’re either bogged down with mind-numbing manual work or resorting to sketchy scraping tools that put their accounts at risk. Neither path leads to predictable pipeline.

- Manual Data Entry: This is the soul-crushing reality for too many teams. SDRs spend hours copying and pasting names, titles, and company details into spreadsheets. That’s not selling; it’s low-value admin work that kills morale and torpedoes productivity.

- Fragile Scraping Tools: To save time, some teams turn to browser extensions or scripts. These tools are notoriously brittle. They break the moment LinkedIn pushes a site update, instantly halting lead flow and leaving the team scrambling.

- Dirty, Unusable Data: Even when scrapers work, the data they produce is often a mess. Your RevOps team is left to clean up an export full of inconsistent job titles, missing information, and unverified contacts—a massive data integrity headache that slows everything down.

The real cost of a broken scraper isn't the subscription fee—it's the lost sales momentum. When your team's primary source of leads suddenly dries up, your entire pipeline is at risk.

A Growing But Treacherous Ecosystem

The demand for LinkedIn data has spawned a crowded and confusing market of tools. We've seen an explosion of options, from heavy-duty scraping infrastructure using proxy rotation to simple Chrome extensions. Some export engagement data from posts, while others focus on building prospect lists.

But this ecosystem is treacherous. By 2026, the market will be saturated, but serious compliance hurdles will remain. Misusing personal data can lead to massive fines under regulations like GDPR and CCPA, forcing sales leaders to second-guess any aggressive automation strategy.

The fundamental issue is that these solutions are just fragmented pieces of a larger workflow. You end up with one tool to scrape data, another to clean it, a third to find emails, and a fourth to run outreach. It’s a patchwork system that’s expensive, inefficient, and constantly at risk of falling apart.

While learning how to use LinkedIn for prospecting is a great start, scaling those efforts demands a smarter GTM platform.

A modern platform doesn't play this risky cat-and-mouse game. Instead of relying on a fragile system to scrape data, it provides direct access to aggregated, verified, and continuously updated B2B data. This allows your team to find better accounts, reach the right people, and convert pipeline faster—without the risk.

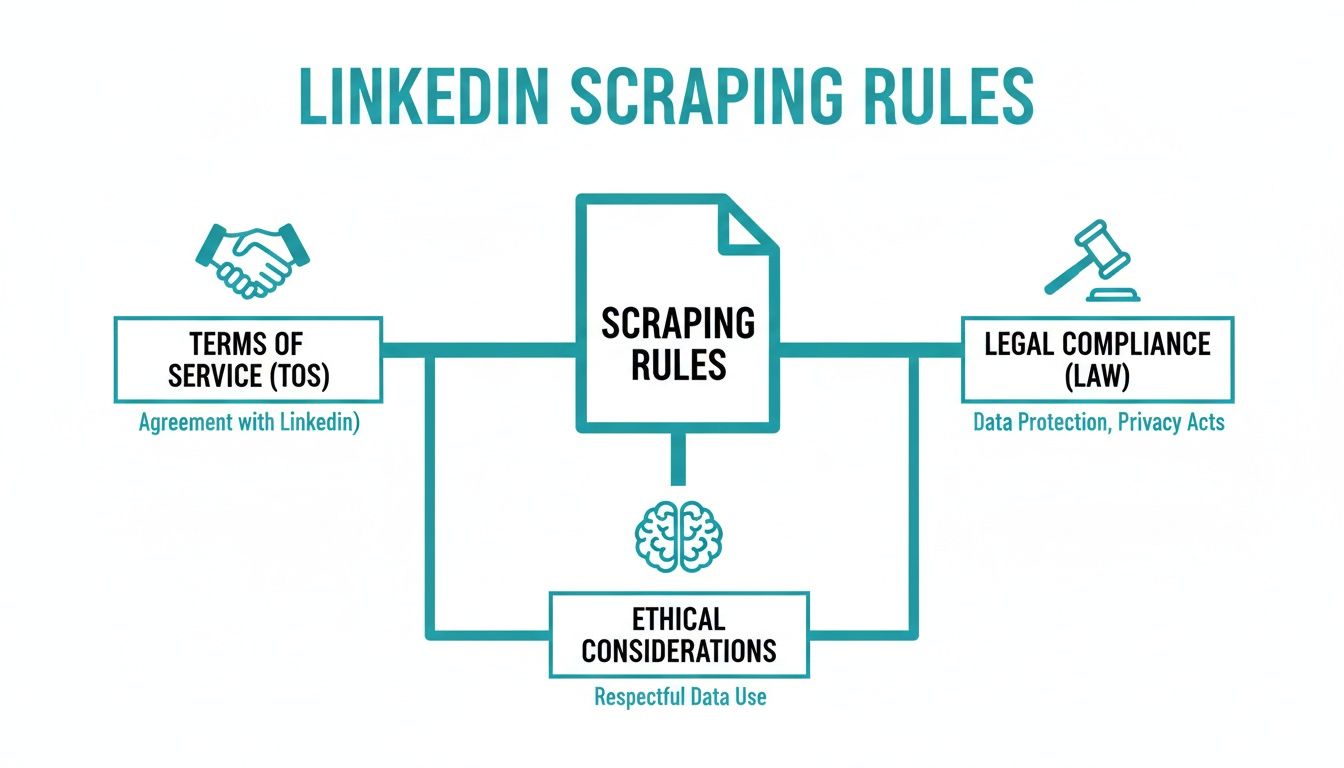

Navigating The Legal And Ethical Tightrope

Before your team even considers a technical solution for pulling prospect data from LinkedIn, you have to address the elephant in the room: is this even legal? The short answer is… it’s complicated. And the stakes are incredibly high. Getting this wrong isn't just about a broken script; it can lead to account bans, legal action, and significant damage to your brand’s reputation.

Let's be blunt. LinkedIn’s User Agreement explicitly forbids any kind of automated data collection. Full stop. When your team fires up a scraper, they are knowingly violating a contract they agreed to. While it might seem like a quick win, using these tools means operating in a constant state of breach.

The real risk here isn't a temporary slap on the wrist. For a sales professional, losing a LinkedIn account means losing a network they've spent years building. That's a career-impacting event that can grind your pipeline to a halt overnight.

The Landmark hiQ Labs Case

The legal landscape around data scraping was thrown into the spotlight by the long-running court battle between LinkedIn and hiQ Labs. The analytics firm, hiQ, was scraping public profile data, and LinkedIn fought back hard. The case bounced around the courts for years, creating widespread confusion.

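The outcome was more mixed than the headlines suggested. The Ninth Circuit held that scraping publicly available profiles likely did not violate the Computer Fraud and Abuse Act, but in 2022 the district court found that hiQ had breached LinkedIn's User Agreement, and the case ended in a settlement with a judgment against hiQ. The takeaway for sales leaders is clear: you can’t hide behind an "it's public data" argument to justify scraping. LinkedIn has both the contractual grounds and the technical muscle to shut down any activity it deems unauthorized.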

Distinguishing Public From Private Data

Here's where many teams get tripped up—the line between public and private data. There’s a common and dangerous assumption that if you can see a profile without being connected, all that information is fair game for automation.

It’s not that simple.

- Publicly Viewable Data: This is the sliver of information a user could see without even logging into LinkedIn. Think name, headline, and not much else.

- Data Behind The Login: This is the goldmine for sales teams—detailed work history, skills, mutual connections, and all the rich data inside Sales Navigator. This is private data, accessible only to logged-in members.

By their very nature, scraping tools must log in to access this valuable data. The second a bot logs in on your behalf, you've crossed a clear line and are in direct violation of LinkedIn’s terms of service.

GDPR, CCPA, And The Global Compliance Minefield

Beyond LinkedIn’s rules, you have a whole other layer of complexity with global data privacy laws. If your team is scraping data and targeting prospects in Europe or California, you're immediately on the hook for GDPR and CCPA compliance.

Imagine this very real scenario: an SDR scrapes a list of 500 contacts, a few of whom are based in Germany. They dump that list into an email sequence without first establishing a lawful basis for processing, such as "legitimate interest," under GDPR. That single act could trigger a compliance audit and expose your company to massive fines.
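One way to keep that scenario from happening is a simple triage step before any list reaches a sequence. The sketch below is an assumption-heavy illustration, not legal guidance: the `split_for_review` helper and the `country` and `state` field names are hypothetical, and the country set covers only the EU and EEA (other regimes, such as the UK's, would need their own rules). It routes anything that might fall under GDPR or CCPA, plus anything with an unknown location, to a human review queue.

```python
# EU member states plus the other EEA countries (ISO 3166-1 alpha-2 codes).
EEA_COUNTRIES = frozenset({
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR",
    "DE", "GR", "HU", "IE", "IT", "LV", "LT", "LU", "MT", "NL",
    "PL", "PT", "RO", "SK", "SI", "ES", "SE",
    "IS", "LI", "NO",
})


def split_for_review(contacts):
    """Separate contacts that need a privacy review from the rest.

    Field names ("country", "state") are hypothetical; adapt to your export.
    """
    unflagged, needs_review = [], []
    for contact in contacts:
        country = (contact.get("country") or "").strip().upper()
        state = (contact.get("state") or "").strip().upper()
        in_scope = (
            not country                              # unknown location: review by default
            or country in EEA_COUNTRIES              # possible GDPR scope
            or (country == "US" and state == "CA")   # possible CCPA scope
        )
        (needs_review if in_scope else unflagged).append(contact)
    return unflagged, needs_review


# Made-up sample rows for illustration.
SAMPLE = [
    {"name": "A", "country": "de"},
    {"name": "B", "country": "US", "state": "CA"},
    {"name": "C", "country": "US", "state": "NY"},
    {"name": "D"},
]
unflagged, needs_review = split_for_review(SAMPLE)
```

Defaulting unknown locations to review is a deliberate choice here: a missing country field is exactly the kind of gap scraped lists tend to have.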

These regulations demand a much higher standard for handling personal data. Just because you found a prospect's email doesn't give you the right to blast them with generic messages. For any sales leader trying to build a sustainable GTM engine, ignoring these rules is not an option.

Understanding the full picture is non-negotiable for a modern sales organization. You can learn more about our commitment to compliant data practices on our legal information page. Building a predictable pipeline means respecting both platform rules and international law. The smart move is to build your outbound engine on a foundation of verified, compliant data from the start and sidestep these risks entirely.

The Technical Reality: How Scraping Actually Works

If you're a RevOps manager or sales leader trying to build a scalable outbound engine, you've probably wondered about the "how" of getting prospect data from LinkedIn. It’s a tricky subject. You need to weigh speed, cost, and, most importantly, the very real risk of getting your accounts banned.

Choosing the wrong technical path can bring your pipeline to a screeching halt. The right one? That often requires serious technical heavy lifting. Let's dig into the common methods your team might be considering and what they actually mean for your business.

Comparison Of LinkedIn Scraping Methods

To make sense of the options, it helps to see them side-by-side. Each method comes with its own set of trade-offs, and what works for a small, one-off project is completely different from what you'd need for a scalable sales operation.

Ultimately, the table highlights a core conflict: the easier methods are the riskiest, while the "safer" DIY approaches are incredibly complex and expensive to maintain.

Headless Browsers: The "Human Mimicry" Approach

One popular method uses what are called headless browsers. Imagine a tool like Puppeteer or Selenium acting like an invisible robot. It opens a browser on a server, logs into LinkedIn, types in a search, scrolls down the page, and copies the data it finds—all without a graphical interface.

The big selling point is that this approach is designed to look more human, making it tougher for LinkedIn's basic bot detection to catch. But for a sales team that needs to move fast, this method is a real drag.

- It’s a resource hog. Running hundreds of these invisible browsers burns through server resources, which translates directly to higher costs.

- It’s painfully slow. Because the scraper has to render the entire webpage and act "human" by scrolling and clicking, scraping a list of 1,000 prospects can take hours, not minutes.

- It’s incredibly fragile. LinkedIn is constantly tweaking its website. A tiny change to the code can break your entire script, sending your engineers scrambling to fix it.

For a sales team, this means your lead flow is completely unpredictable. Your SDRs might get a fresh list one day, but the next day the scraper is broken and the pipeline dries up. It’s a reactive, high-maintenance way to operate.

This whole process is a tightrope walk. You have to balance what's technically possible with what's allowed.

As you can see, any technical approach needs to be viewed through the lens of LinkedIn's Terms of Service, the evolving legal landscape, and your own company's ethical compass.

Direct HTML Parsing With Python Libraries

Another path involves using Python libraries like BeautifulSoup or Scrapy. Instead of rendering the visual page, these tools download the raw HTML source code. They then sift through that code to find specific data points, like a name inside a specific tag or a job title in a certain section.

This is much faster and less resource-intensive than a headless browser because you're just working with text. The problem? It's also far easier for LinkedIn to spot and shut down. Without the full browser environment, your script screams "bot."

Let’s be clear: scraping LinkedIn is fundamentally harder than scraping most other websites. Their anti-bot tech is sophisticated, and the site's code is notoriously messy and always changing. What works today will almost certainly be broken tomorrow.
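To make that fragility concrete, here is a minimal sketch that uses only Python's standard-library `html.parser` and runs against two made-up HTML snippets, not any real page. The class names (`profile-title`, `pt-k22`), the sample markup, and the `extract` helper are all invented for illustration. The point is narrow: a parser tied to one class name silently returns nothing the moment that class is renamed.

```python
from html.parser import HTMLParser


class ClassTextExtractor(HTMLParser):
    """Collect the text of the first element carrying a given CSS class.

    Sketch only: assumes well-formed markup with no unclosed void tags
    (such as a bare <br>) inside the matched element.
    """

    def __init__(self, target_class):
        super().__init__()
        self.target_class = target_class
        self._depth = 0     # > 0 while inside the matched element
        self.text = None    # stays None if the class never appears

    def handle_starttag(self, tag, attrs):
        if self._depth:
            self._depth += 1    # nested tag inside the match
            return
        classes = (dict(attrs).get("class") or "").split()
        if self.text is None and self.target_class in classes:
            self._depth = 1
            self.text = ""

    def handle_endtag(self, tag):
        if self._depth:
            self._depth -= 1

    def handle_data(self, data):
        if self._depth:
            self.text += data


def extract(html, target_class):
    """Return the stripped text for target_class, or None if it is absent."""
    parser = ClassTextExtractor(target_class)
    parser.feed(html)
    return parser.text.strip() if parser.text is not None else None


# Hypothetical markup before and after a site update renames its classes.
OLD_MARKUP = '<div><h1 class="profile-name">Ada Example</h1><p class="profile-title">VP Sales</p></div>'
NEW_MARKUP = '<div><h1 class="pn-x91">Ada Example</h1><p class="pt-k22">VP Sales</p></div>'

before = extract(OLD_MARKUP, "profile-title")
after = extract(NEW_MARKUP, "profile-title")
```

Nothing raises an error in the second case. The job title simply comes back empty, which is why a broken parser often goes unnoticed until the lead lists dry up.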

Building A Real Scraping Operation: The Architecture

If you're serious about building an in-house scraping system, the setup is far more involved than just a simple script. A truly robust operation requires a multi-layered architecture built from the ground up to avoid getting caught.

- Proxy Management: You can't fire off thousands of requests from one IP address without getting blocked instantly. A real operation needs a pool of rotating residential proxies. This makes your requests look like they're coming from different, real users in various locations, making your activity much harder to trace.

- Rate Limiting and Throttling: The key to survival is to not act like a machine. Your script has to be programmed to pause between actions, randomize wait times, and avoid predictable, robotic patterns. For example, it might view a profile, wait a random 5-10 seconds, scroll a bit, wait again, and then move on. It also needs to stay within daily limits to avoid triggering account restrictions.

- Session and Cookie Management: To get to the good stuff, your scraper has to be logged in. This means carefully managing session cookies (like li_at and JSESSIONID) to keep an authenticated session active. Repeatedly re-entering a username and password is a huge red flag for bot detection.
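The pause-and-cap behavior from the rate-limiting bullet can be sketched in a few lines of Python, independent of any particular site. The `Throttle` class and its parameter names are assumptions made for illustration, and the daily limit is left as a required argument because the text gives no number. Only the random 5-10 second wait comes from the bullet above.

```python
import random
import time


class Throttle:
    """Randomized pause before each action, plus a hard daily cap."""

    def __init__(self, daily_limit, min_wait=5.0, max_wait=10.0,
                 sleep=time.sleep, rng=None):
        self.daily_limit = daily_limit
        self.min_wait = min_wait
        self.max_wait = max_wait
        self.used_today = 0
        self._sleep = sleep                  # injectable so tests need not wait
        self._rng = rng or random.Random()

    def acquire(self):
        """Return False once the daily budget is spent; otherwise wait, then return True."""
        if self.used_today >= self.daily_limit:
            return False
        self._sleep(self._rng.uniform(self.min_wait, self.max_wait))
        self.used_today += 1
        return True

    def reset_day(self):
        """Call once per day (for example from a scheduler) to restore the budget."""
        self.used_today = 0
```

Passing `sleep` and `rng` in as arguments is a testing convenience: a fake sleep function lets you check the budget logic without actually waiting. Even this small piece needs scheduling, persistence across restarts, and monitoring before it is production-ready, which is part of why the full architecture becomes an engineering project.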

Building and maintaining this kind of system is a full-time engineering project. It’s a constant cat-and-mouse game that pulls your technical talent away from focusing on your actual product or business. Even with all that effort, a single algorithm update from LinkedIn can make your entire infrastructure worthless overnight.

Instead of building a fragile, high-risk scraping system, modern platforms like Willbe give you direct access to verified, compliant B2B data. This lets your team focus on what actually drives revenue—engaging prospects and closing deals—instead of managing brittle scripts. For anyone looking to build better-targeted lists without the technical headaches and risk, our guide on how to properly use advanced search on LinkedIn is a great place to start.

That Messy CSV File Won't Cut It: From Raw Data to Revenue-Ready Leads

Getting your hands on scraped data feels like the finish line, but it’s really just the start. The real challenge—and where so many teams stumble—begins the second you’re staring at a messy CSV file.

Raw, scraped data isn't an asset; it's a liability. It's almost always riddled with inconsistencies, outdated details, and missing information that make it completely useless for any kind of thoughtful outreach. This is the painful lesson many sales teams learn: scraping isn't a magic button. It's just the first, messy step in a much longer process of turning that raw material into something your SDRs can actually use.

From Jumbled Exports to Actionable Insights

Picture this: your SDR opens a "fresh" list of scraped prospects. One contact is listed as "VP Sales," another is "V.P. of Sales," and a third is "Vice President, Sales." We know it's the same job, but your CRM and automation tools see three totally different titles. Good luck trying to segment or personalize anything with that mess.

This is the chaos of raw data. To get it ready for your GTM motion, you need a disciplined workflow focused on three critical stages: cleaning, validation, and enrichment.

- Data Cleaning: This is the grunt work of standardizing job titles, fixing capitalization in names, and forcing everything into a consistent format. It’s the foundation for a reliable database and clean CRM.

- Data Validation: You have to ask the tough questions. Is the company still in business? Is that person still in the role? Validation is all about checking if the scraped info holds up in the real world today.

- Data Enrichment: Here's where the real value comes in. A scraped LinkedIn profile almost never includes a verified business email or a direct-dial phone number. Enrichment is the process of taking the name and company you scraped and using other data sources to find that crucial contact info.
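The cleaning stage is the easiest of the three to picture in code. Here is a minimal sketch, assuming a tiny hand-written abbreviation map (a real one would be far larger and would need review by whoever owns your CRM taxonomy). The `normalize_title` function and both lookup tables are illustrative names, not part of any library.

```python
import re

# Illustrative abbreviation map; extend it for your own data.
ABBREVIATIONS = {
    "vp": "vice president",
    "svp": "senior vice president",
    "dir": "director",
    "mgr": "manager",
}
# Connector words that carry no meaning for segmentation.
FILLER_WORDS = {"of", "the", "for"}


def normalize_title(raw):
    """Map spelling variants of a job title onto one canonical form."""
    # Remove dots first so "V.P." collapses to "vp" instead of "v" and "p".
    text = raw.lower().replace(".", "")
    tokens = re.findall(r"[a-z]+", text)
    expanded = []
    for token in tokens:
        if token in FILLER_WORDS:
            continue
        expanded.extend(ABBREVIATIONS.get(token, token).split())
    return " ".join(expanded).title()


variants = ["VP Sales", "V.P. of Sales", "Vice President, Sales"]
canonical = {normalize_title(v) for v in variants}
```

All three variants from the example above collapse to the single value "Vice President Sales", so your CRM can segment on one title instead of three. Rule-based cleanup like this only covers the patterns you have already seen, so every new export tends to surface titles the map misses.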

For any sales leader, skipping these steps is a recipe for disaster. Blasting emails to unverified addresses will wreck your domain reputation and land your entire team in the spam folder. Trying to personalize outreach with incomplete data just makes your team look sloppy.

The Problem with a Patchwork Data Process

To get this done, many teams stitch together a fragile, expensive, and time-consuming tech stack. They use one tool for scraping data, another service to clean the spreadsheet, a third to find and verify emails, and then manually upload the final list into their CRM.

This fragmented workflow creates massive friction. Your RevOps team ends up playing firefighter, constantly fixing broken data syncs. Meanwhile, your SDRs are stuck waiting around for usable lists, which kills their momentum and wastes precious selling time.

The All-in-One Alternative

This exact problem is why modern GTM platforms like Willbe exist. We recognized that scraping data from LinkedIn is just one small, risky piece of a much bigger puzzle. Instead of forcing your team to manage fragmented tools and manual workflows, we’ve built the entire process into a single, cohesive platform.

Here’s how an all-in-one approach changes the game:

- You start with verified data. Willbe aggregates information from over 30 B2B databases, so you aren't gambling on a fragile scraper. The data is already clean, verified, and continuously updated.

- Enrichment is built right in. Forget needing a separate tool to find emails. As you build target account lists, contact information is automatically provided and verified, ensuring high deliverability from the start.

- It all syncs directly to your CRM. Leads and account data flow straight into your CRM without anyone needing to touch a CSV file. This eliminates manual errors and keeps your system of record clean and reliable.

An integrated approach like this gets rid of the bottlenecks that plague teams trying to build their own systems. Instead of spending 80% of their time on data prep and only 20% on outreach, your SDRs can flip that ratio. They get to do what you hired them for: engaging prospects and starting real conversations.

The Modern Alternative To Risky Scraping Workflows

Let's be honest—the DIY approach to scraping data from LinkedIn is a house of cards. You're constantly juggling proxies, patching up broken parsers after every platform update, and trying to make sense of messy CSV files. It’s a fragile, time-consuming game that pulls your focus away from revenue.

Every hour an engineer spends fixing a script is an hour they could have spent improving your product. Every day an SDR waits for a clean prospect list is a day of lost sales momentum. You're building a critical part of your revenue engine on a high-risk foundation that's always one step away from collapsing.

Instead of trying to duct-tape a scraping operation together, the smartest sales teams are taking a different approach. It's not about finding a better scraper; it's about making the whole concept of scraping obsolete.

From Managing Tech Stacks To Building Pipeline

The goal was never to become an expert in headless browsers or proxy rotation. The real mission has always been to find the right accounts, connect with the right people, and start conversations that turn into revenue. An all-in-one platform like Willbe brings your team's focus back to that mission.

Think about an SDR who used to burn half their day fighting with spreadsheets. Now, they can build a perfectly segmented, verified, and enriched list of prospects in just a few minutes, all inside one system.

This solves the biggest headaches of a fragmented GTM motion:

- No More Account Bans: You're pulling data from a compliant, aggregated source instead of running scripts that violate LinkedIn's terms of service.

- Instantly Clean Data: Forget about manually standardizing job titles or fixing weird formatting. The data comes out structured and ready for your CRM.

- No Broken Automations: Your lead flow is no longer at the mercy of a random LinkedIn code change. It just works.

The real win here isn't just saving time—it's what your team does with that time. When SDRs aren't bogged down by data grunt work, they can focus on what actually matters: personalized outreach and real conversations. That’s how you actually build a predictable pipeline.

The Power Of Aggregated, Verified Data

A true GTM platform does more than just replace your scraper; it replaces your entire collection of fragmented data tools. Rather than gambling on a single, risky source, platforms like Willbe bring together information from over 30 B2B databases. This gives you a far richer, more accurate, and constantly updated data pool than any single scraper could ever hope to build.

By 2026, LinkedIn is projected to have over 1.3 billion users, making it an incredibly rich source of prospects. But that scale also means the risk of scrapers breaking is higher than ever. A recent analysis of 70,000 campaigns found that using AI-optimized, template-free outreach on high-quality data drove a 10% reply rate. The ROI is clear: better data leads to better results. For sales leaders, this confirms the need for platforms that deliver verified global contacts without the fragility of scraping. You can learn more about these LinkedIn usage trends and what they mean for modern sales.

A Faster, Safer Path To Predictable Revenue

This integrated model helps your team move faster and with more confidence. Instead of a clunky, multi-tool process, the workflow becomes seamless. You find your ideal customer profile, get their verified contact info, and sync it all to your CRM without ever leaving the platform.

Ultimately, this is about building a predictable revenue engine. When your data is reliable and your team is focused on selling, you create a scalable system for growth. You finally get to stop reacting to broken tools and start proactively building your pipeline.

Frequently Asked Questions About Scraping LinkedIn Data

Once you start looking into LinkedIn data extraction, the practical questions start piling up fast. We hear these all the time from sales and RevOps leaders who are deep in the trenches, so let's get straight to the answers.

What Is The Safest Number Of Profiles To Scrape Per Day?

Everyone wants a magic number, but there isn't one. LinkedIn’s detection systems are smart, and they're always changing. The goal in 2026 is to act like a human, not a bot—and humans don't suddenly view thousands of profiles in a day.

An active, real person might look at 80-100 profiles manually. Try to automate that at scale with a basic script, and you’ll trip an alarm instantly. The real secret is moderation and unpredictability. This is exactly why dedicated platforms are a safer bet; they use huge proxy pools and intelligent throttling to mimic natural user behavior far better than any DIY script ever could.

Can I Get Banned For Using A Chrome Extension Scraper?

Yes, absolutely. They seem easy and convenient, but Chrome extension scrapers are one of the most visible and riskiest methods out there.

Think about it: the extension runs inside your browser, using your own account, your own session, and your own IP address. It’s like leaving a massive digital footprint that points directly back to you. LinkedIn's rules are crystal clear about prohibiting this, and the consequences range from a temporary timeout to a permanent ban. Losing your account means losing your entire network and pipeline. It’s just not worth the gamble for any serious professional.

The bottom line is that these extensions can't hide what they're doing. They're automating actions from your personal account in plain sight, making it a question of when you get caught, not if.

Is It Better To Scrape Sales Navigator Than Regular LinkedIn?

That's a different beast entirely, and the stakes are much, much higher. While Sales Navigator has beautifully structured data for prospecting, LinkedIn watches its premium accounts like a hawk for any signs of automation. You are, after all, paying for a premium service, and they protect that ecosystem fiercely.

Getting your Sales Navigator account suspended isn't just an inconvenience; it's a direct financial loss and a major disruption to your business. The risk is significantly greater. The smart play is to use Sales Navigator for what it's designed for—in-depth research and targeted, manual outreach. For sourcing and enriching contacts at scale, you should rely on a compliant B2B data platform.

How Do I Turn Scraped Profile URLs Into Email Addresses?

This step is called enrichment, and it's where the whole DIY scraping process usually falls flat. A LinkedIn profile URL doesn't contain a person's email or phone number.

To get that contact info, you have to take the name and company you scraped and run it through a third-party enrichment service. These tools check their own massive databases to find a verified corporate email. This adds another layer of complexity, cost, and potential failure to an already fragile workflow.
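In code, that lookup is essentially a join on name and company. The sketch below uses a plain dictionary as a stand-in for a third-party enrichment service. The `DIRECTORY` data, the `match_key` and `enrich` helpers, and the `.example` addresses are all hypothetical; a real service would expose its own API and matching rules.

```python
def match_key(full_name, company):
    """Build a case- and whitespace-insensitive (name, company) join key."""
    def squash(value):
        return " ".join(value.lower().split())
    return (squash(full_name), squash(company))


def enrich(contacts, directory):
    """Attach an email where the directory has a match; collect the misses."""
    enriched, misses = [], []
    for contact in contacts:
        email = directory.get(match_key(contact["name"], contact["company"]))
        if email is None:
            misses.append(contact)
        else:
            enriched.append({**contact, "email": email})
    return enriched, misses


# Stand-in for a third-party enrichment service (hypothetical data).
DIRECTORY = {
    match_key("Ada Example", "Acme Corp"): "ada@acme.example",
}

# Scraped rows often arrive with inconsistent casing and spacing.
CONTACTS = [
    {"name": "  ada   EXAMPLE", "company": "ACME corp"},
    {"name": "Sam Placeholder", "company": "Globex"},
]

enriched, misses = enrich(CONTACTS, DIRECTORY)
```

The `misses` list is the part to watch. Every contact that fails to match is a scraped row you paid for in time and risk but cannot actually reach, and a join this simple will also miss people whose company name is written differently in the two sources.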

It’s precisely why modern prospecting platforms build enrichment right into their system. You get a clean, ready-to-use list of leads without having to piece together multiple tools. The data is instantly actionable.

Recent analysis of over 70,000 campaigns revealed that AI-powered messages sent to high-quality leads can achieve 10% reply rates—a huge jump from the old spray-and-pray approach. For today's sales teams, the choice is clear: stop using risky, piecemeal scrapers and move to a unified platform that provides verified data and syncs directly with your CRM. It's the only path to predictable growth.

Instead of fighting with unreliable scrapers and juggling different data tools, top-performing sales teams are unifying their entire process. Willbe gives you compliant access to a constantly updated B2B contact database, replacing that whole shaky workflow with one powerful platform. You can find your ideal prospects, enrich their data on the spot, and launch personalized campaigns, all in one place.